Apple’s Renewable Energy

Apple’s attention to the details of its environmental impact has become one of the best things about the company. It is in a position to have a substantial impact and it is constantly pushing forward. The latest news is that Apple is now globally powered by 100 percent renewable energy. Even better, it is bringing its suppliers into clean energy: as of today, nine more of its suppliers have committed to 100 percent clean energy production.

“We’re committed to leaving the world better than we found it. After years of hard work we’re proud to have reached this significant milestone,” said Tim Cook, Apple’s CEO. “We’re going to keep pushing the boundaries of what is possible with the materials in our products, the way we recycle them, our facilities and our work with suppliers to establish new creative and forward-looking sources of renewable energy because we know the future depends on it.”

Apple’s new headquarters in Cupertino is powered by 100 percent renewable energy, in part from a 17-megawatt onsite rooftop solar installation.

Apple and its partners are building new renewable energy projects around the world, improving the energy options for local communities, states and even entire countries. Apple creates or develops, with utilities, new regional renewable energy projects that would not otherwise exist. These projects represent a diverse range of energy sources, including solar arrays and wind farms as well as emerging technologies like biogas fuel cells, micro-hydro generation systems and energy storage technologies.

It goes without saying that all companies should follow Apple’s lead.

Roundup of recent articles and podcasts

We’ll start with MacStories, which has been very busy churning out articles I’ve really enjoyed.

Most recently, Federico Viticci hit on a topic that I also wrote about recently. Of course, his article is of much greater length and detail (when are his articles not of great length and detail?). His article, Erasing Complexity: The Comfort of Apple’s Ecosystem, is an excellent read:

There are two takeaways from this story: I was looking for simplicity in my tech life, which led me to appreciate Apple products at a deeper level; as a consequence, I've gained a fresh perspective on the benefits of Apple's ecosystem, as well as its flaws and areas where the company still needs to grow.

After a couple of years experimenting with lots of third-party hardware and apps, he’s simplifying:

But I feel confident in my decision to let go of them: I was craving the simplicity and integration of apps, services, and hardware in Apple's ecosystem. I needed to distance myself from it to realize that I'm more comfortable when computers around me can seamlessly collaborate with each other.

I’ve never gone to the lengths that he has. I don’t have the money, the time or the inclination for such far-ranging experiments, be they with apps or hardware. But I’ve dipped my toes in enough to know that constant experimentation with new apps takes time away from doing other things. At some point experimentation becomes a thing unto itself, which is fine if that’s something one enjoys. I think many geeks fall into this.

His conclusion is spot on:

It took me years to understand that the value I get from Apple's ecosystem far outweighs its shortcomings. While not infallible, Apple still creates products that abstract complexity, are nice, and work well together. In hindsight, compulsively chasing the "best tech" was unhealthy and only distracting me from the real goal: finding technology that works well for me and helps me live a better, happier life.

This tech helps us get things done. It is a useful enhancement but it is not the end goal.

A week or so ago Apple announced an upcoming event for March 27, centered on education and taking place in Chicago. There’s a lot Apple can do in this area, but it hasn’t provided much detail about the event, so of course there’s been LOTS of speculation. John Voorhees of MacStories has a fantastic write-up of his expectations based on recent history in education tech as well as Apple’s own history in education. He thinks the event will “mark a milestone in the evolution of its education strategy”:

However, there’s a forest getting lost for the trees in all the talk about new hardware and apps. Sure, those will be part of the reveal, but Apple has already signaled that this event is different by telling the world it’s about education and holding it in Chicago. It’s part of a broader narrative that’s seen a shift in Apple’s education strategy that can be traced back to WWDC 2016. Consequently, to understand where Apple may be headed in the education market, it’s necessary to look to the past.

It’s a great read. The event is this week so we’ll know more soon.

With the topic of Apple and education, there’s been a lot of talk about Google’s success with Chromebooks in education. As the story goes, many schools have switched because Chromebooks are cheap, easy to manage and come with free cloud-based apps that teachers (and school staff) find very useful. Another of my favorite Apple writers is Daniel Eran Dilger over at AppleInsider, and he’s got a great post challenging the ongoing narrative that Apple is in dire straits in the education market. Specifically, he takes on the currently popular idea that Apple should drop its prices in a race to the bottom with companies that sell hardware for so little that they make little to no profit. How is “success” measured in such spaces? Dilger covers a lot of ground, and it’s worth a read for more context, current and historical, on that market. He’s got another recent post about Google’s largely failed attempt at entering the tablet market in general: Google gives up on tablets: Android P marks an end to its ambitious efforts to take on Apple’s iPad.

Rene Ritchie over at iMore continues to do a fantastic job with both his writing and his podcasting. His recent interview with Carolina Milanesi on the subject of Apple and education is excellent, and it’s available there as audio or as a transcript. I found myself agreeing with almost everything I heard. Carolina also recently posted an excellent essay on tech in education over at Tech.pinions.

One thing in particular I’ll mention here: iWork. I love the iWork apps and have used them a lot over the years. That said, I agree with the sentiment that they are not updated nearly enough. I would love for Apple to move these apps higher up its priority list. It would be great to see the iPad versions finally brought up to par with the Mac versions.

Rene also did another education-related podcast interview, this one with Bradley Chambers, whose day job is in education IT.

Since getting HomePod I’ve done a bit of thinking about how I use the iOS ecosystem: touch, typing, voice, and Siri. Apple has created very flexible and powerful interconnections between these devices, resulting in a pretty fantastic experience.

Siri and the iOS Mesh

Over the past couple of years it’s become a thing, in the nerd community, to complain incessantly about how inadequate Siri is. To which I incessantly roll my eyes. I’ve written about Siri many times, and mostly positively, because my experience has been mostly positive. Siri’s not perfect, but in my experience it is usually pretty great. A month ago HomePod came into my house, and I’ve been integrating it into my daily flow since. I’d actually started a “Month with HomePod” sort of post but decided to fold it into this one because something shifted in my thinking over the past day, and it has to do with Siri and iOS as an ecosystem.

It began with Jim Dalrymple’s post over at The Loop: Siri and our expectations. I like the way he discusses Siri here. Rather than just complain as so many do, he breaks it down by device: what he expects from Siri on each one and whether those expectations are met. To summarize, he’s happy with Siri on HomePod and CarPlay but not on iPhone or Watch. His expectations on the phone and watch are higher and they are not met, which leads him to conclude: “It seems like such a waste, but I can’t figure out a way to make it work better.”

As I read through the comments I came to one by Richard in which he states, in part:

"I’ve improved my interactions with Siri on both my iPhone 8 and iPad Pro by simply avoiding “hey Siri” and instead holding down the home button to activate it. Not sure how that’s done on an iPhone X but no doubt there’s a way... Since getting the HomePod I’ve reserved “Hey Siri” for that device and the watch. My iPads and iPhone are now activated via button and yes, it seems better because it’s more controlled, more deliberate and usually in the context of my iPad workflow. In particular I like the feel of activating Siri with the iPad and the Brydge keyboard as it has a dedicated Siri key on the bottom left of the keyboard. The interesting thing about this keyboard access to Siri is that it feels more instantaneous. A lot of folks gave up on Siri when it really sucked in the beginning and like you, I narrowed my use to timers and such. But lately I’m expanding my use now that I’ve mostly dumped “hey Siri” and am getting much better results. Obviously “hey Siri” is essential with CarPlay but it works well there for some odd reason."

Siri is also much faster at getting certain tasks done on my screen than tapping or typing ever could be. One example: searching my own images. With a tap and a voice command I’ve got images presented in Photos matching whatever criteria I’ve given. Images of my dad from 2018? Done. Pictures of dogs from last month? Done. That’s much faster than opening the Photos app and then tapping into a search. Want to find YouTube videos of Stephen Colbert? I could open a browser window and start a search, which loads results in Bing, or type in YouTube, wait for that page to load, type in Stephen Colbert, hit return and wait again. Or I can activate Siri and say “Search YouTube for Stephen Colbert,” which loads much faster than a web page, and then tap the link in the bottom right corner to be taken to YouTube for the results.

One thing I find myself wishing for on the big screen of the iPad is that Siri, when activated, would take up just a portion of the screen rather than completely taking over the iPad. Maybe as a Slide Over? I’d like to be able to make a request of Siri and keep working rather than wait. Along those lines, I’d like Siri to be treated like an app, allowing me to go back through my request history. The point here is that Siri isn’t just a digital assistant but is, in fact, an application. Give it a persistent form with its own window that I can keep around and I think Siri would be even more useful. Add to that the ability to drag and drop (which would come with its status as an app) and it’s even better.

Which brings me to voice and visual computing. Specifically, the idea of voice-first computing as it relates to Siri, HomePod and others such as Alexa, Google, etc. After a month with HomePod (and months with AirPods) I can safely say that while voice computing is a nice supplement to visual for certain circumstances, I don’t see it being much more than that for me anytime soon, if ever. As someone with decent eyesight who makes a living using screens, I will likely continue spending most of my days with a screen in front of me. Even HomePod, designed to be voice-first, is not going to be that for me.

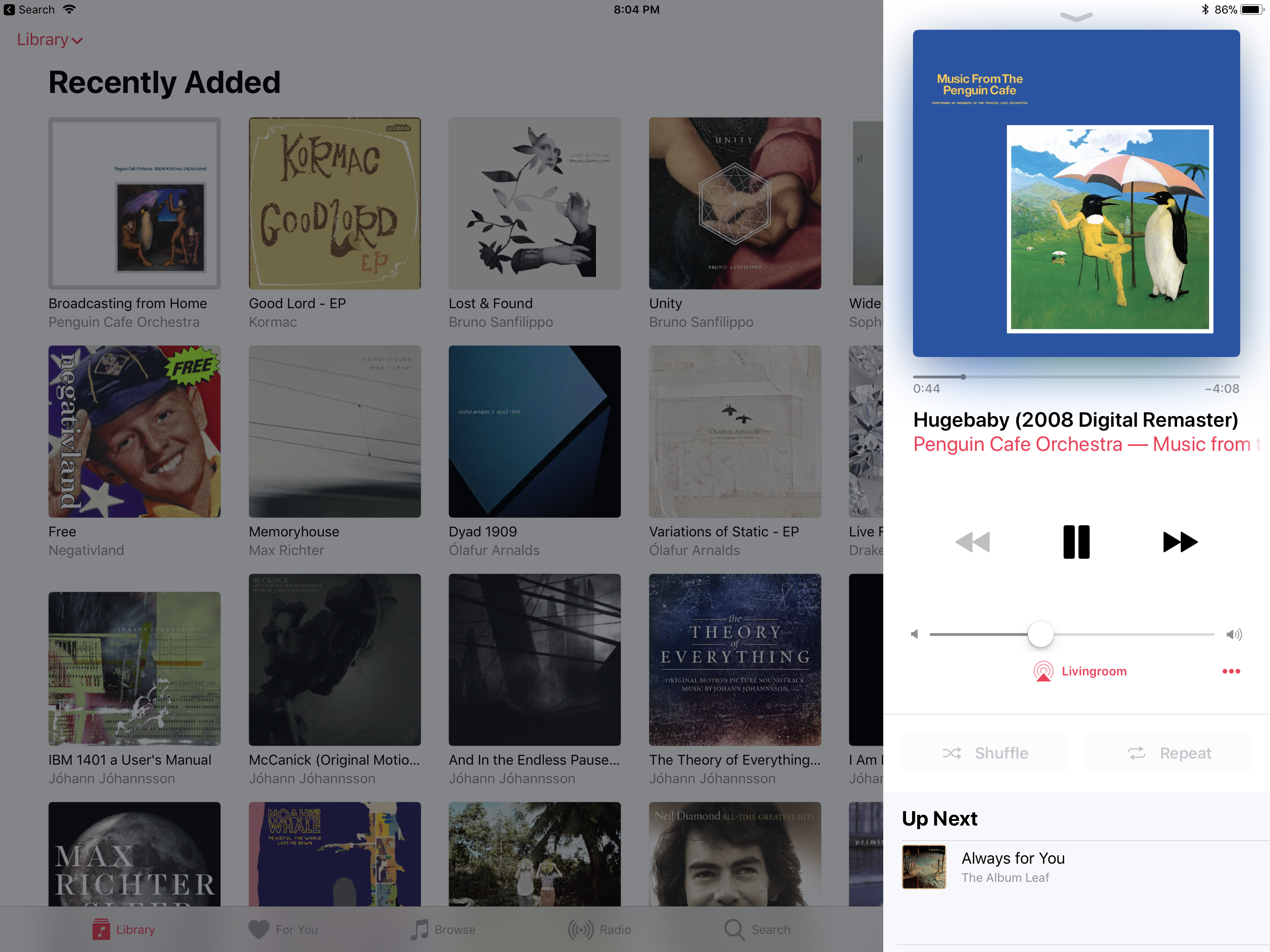

I recently posted that with HomePod as a music player I was having trouble choosing music. With an Apple Music subscription there is so much available, and I’m terrible at remembering artist names and even worse at album names. It works great to just ask for music, a genre or a recent playlist. That covers about 30% of my listening. But I often want to browse, and the only way to do that is visually. So, from the iPad or iPhone I’m usually using the Music app for streaming or the Remote app for accessing the music in my iTunes library on my Mac mini. I do use voice for some playback control and make the usual requests to control HomeKit stuff. But I’m using AirPlay far more than I expected.

Using the Music app and Control Center from iPad or iPhone is yet another way to control playback.

Apple has made efforts to connect our devices together with features such as AirDrop and Handoff. I can answer a call on my watch or iPad. At this point everything almost always stays in sync, and moving from one device to another is nearly frictionless. What I realize now is just how well this ecosystem works when I embrace it as an interconnected system of companions that form a whole. It works as a mesh which, thanks to HomeKit, also includes lights, a heater and a coffee maker, with more devices to come. An example of this mesh: I came in from a walk 10 minutes ago, streaming Apple Music on my phone and listening via AirPods. When I came inside I tapped the AirPlay icon to switch the audio output to HomePod. Now I’m working on my iPad and can control the phone’s playback via Apple Music or Control Center on the iPad or, if I prefer, I can speak to the air to control that playback. It’s a nice convenience because I left the phone on the shelf by the door, whereas the iPad is on my lap.

At any given moment, within this ecosystem, all of my devices are interconnected. They are not one device but they function as one. They allow me to interact visually or by voice with different iOS devices in my lap or across the room, as well as with non-computer devices in HomeKit, which means I can turn a light off across the room or, if I’m staying late after dinner at a friend’s house, turn on a light for my dogs from across town.

So, for the nerds that insist that having multiple timers is very important, I’m glad that they have Alexa for that. I’m truly happy that they are getting what it is they need from Google Assistant. As for myself, well, I’ll just be over here suffering through all the limitations of Siri and iOS.

The AR-15 is Different

Radiologist Heather Sher, writing for The Atlantic:

In a typical handgun injury that I diagnose almost daily, a bullet leaves a laceration through an organ like the liver. To a radiologist, it appears as a linear, thin, grey bullet track through the organ. There may be bleeding and some bullet fragments.

I was looking at a CT scan of one of the victims of the shooting at Marjory Stoneman Douglas High School, who had been brought to the trauma center during my call shift. The organ looked like an overripe melon smashed by a sledgehammer, with extensive bleeding. How could a gunshot wound have caused this much damage?

The reaction in the emergency room was the same. One of the trauma surgeons opened a young victim in the operating room, and found only shreds of the organ that had been hit by a bullet from an AR-15, a semi-automatic rifle which delivers a devastatingly lethal, high-velocity bullet to the victim. There was nothing left to repair, and utterly, devastatingly, nothing that could be done to fix the problem. The injury was fatal.

Update: Asha Rangappa:

This is a must-read. It illustrates why the NRA is so reluctant to allow the CDC to research gun violence as a public health issue: The facts would be devastating.

This is craziness.

About American Violence

We, U.S. citizens, have given our approval to our gun problem because we refuse to force “our” government to do our bidding. We post our outrage on social media (hi!!) but we will not do what must be done because it requires getting off our asses and into streets and gov offices.

Ultimately, it is either a government of and by the people or it is not. Generally speaking it is not. We pretend it is a form of “democracy” but we know it’s not. It’s a farce run by the wealthy. We KNOW this. But to change it would be frightening and difficult. We are complicit.

When we are willing to shut down the country, for real, SHUT IT DOWN with tactics such as general strikes then change will happen. When we occupy DC and shut it down we will have change. But it won’t happen because not only is our gov broken, we are broken. And scared. And lazy.

The hell we’ve created is made stable by our apathy. Its foundation is our apathy. Its violence is our apathy. So when these things happen, we should go to the nearest mirror if we want to see who it is that’s responsible.

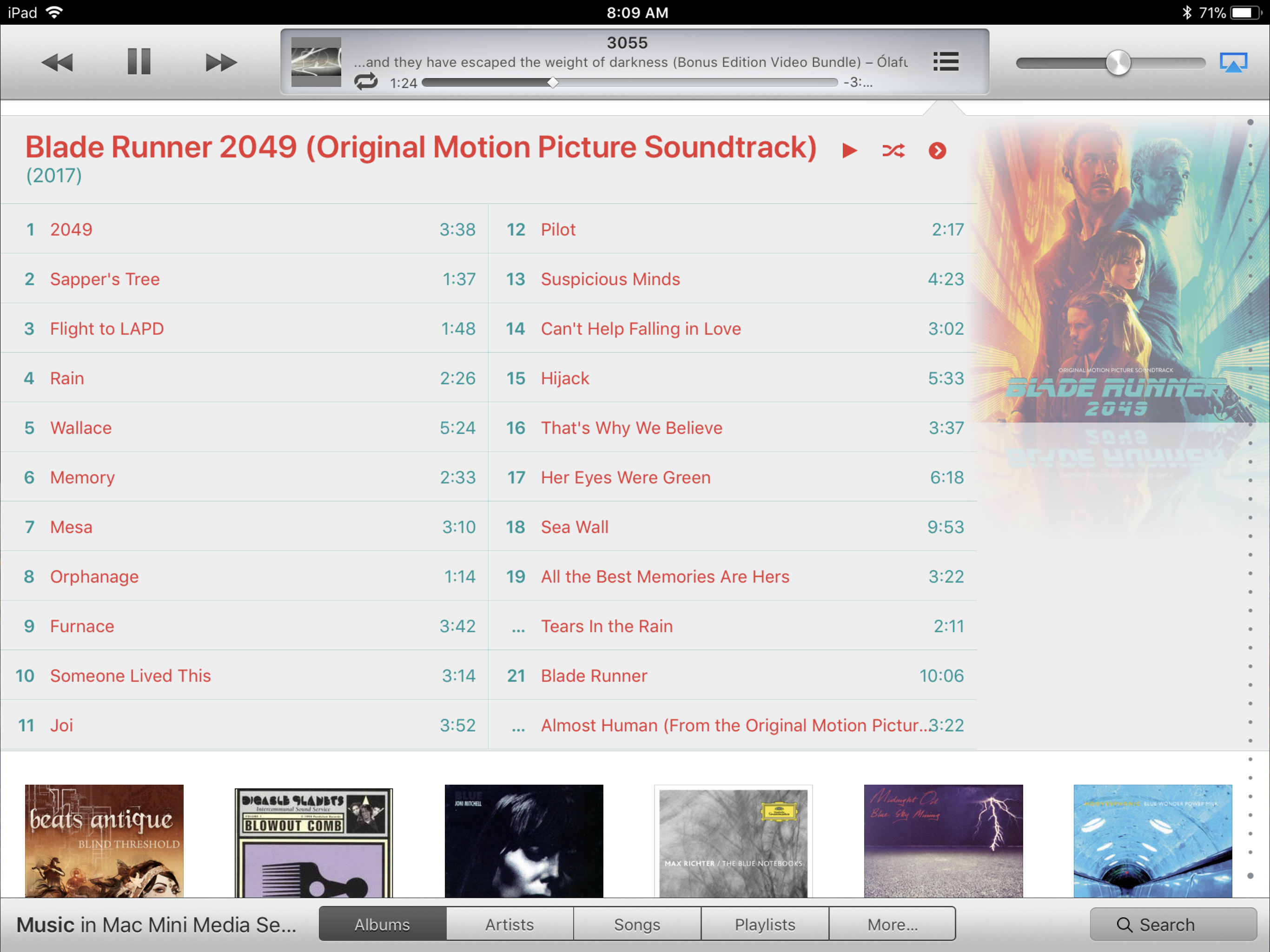

Revisiting iTunes with HomePod

I’ve been enjoying my old music along with the new, but using iTunes on my Mac rather than streaming via Apple Music. AirPlay works great from Mac to HomePod, and the old Remote app still works pretty well on iPad/iPhone (even with the dated interface!).

Like many, I’ve been using iTunes since its first versions. Over the past year that use dwindled a great deal, as my music playing was mostly via Music on an iOS device. In the couple of years before that I’d been using Plex on iOS devices and Apple TV to access my iTunes library on the Mac because, frankly, Home Sharing was pretty crappy. Alternatively, I would use the Remote app on an iOS device to control iTunes on the Mac, which also worked pretty well. The downside was that I didn’t have a decent speaker. I alternated between various (and cheap) computer and/or Bluetooth speakers and the built-in TV speakers. None of them were great but they were tolerable. I live in a fairly small space, a “tiny house,” so even poor to average speakers sound okay.

Today I’ve got the HomePod, and after a year of enjoying Apple Music on iPads and iPhone I’ve added lots of music that I usually just stream, often from my recently played or heavy rotation lists. But two things have surfaced now that I’ve been using HomePod for a few days. First, as I mentioned in my review of HomePod, I’m not very good at choosing music without a visual cue. Second, I live in a rural location, and when my satellite data allotment runs out, streaming Apple Music becomes less dependable. Sometimes it’s fine. Sometimes not. Such was the case last night. So, after a year away from the iTunes library on my Mac, I opened the Apple Remote app. I set the output for iTunes to the HomePod and spent some time with my “old” music, all streaming to the best speaker I’ve ever owned. So nice.

This morning it occurred to me that while I’m back on my “bonus” data (2am-8am) I should consider downloading some of the music I’ve added to my library over the past few months of discovery through Apple Music. Looking at that list, I see that every month or two, as I discover new music, the previous discoveries roll out of my attention span. There are “new” things I discovered 5 months ago that I enjoyed but then forgot. It’s a great problem to have! So I’ve spent the morning downloading much of the music I’ve added to my library over the past year. I never intended to actually download any of it, as streaming has worked so well. But with the HomePod I see now that keeping my local iTunes/Music library up to date has great benefit.

So, how well does this new music-playing process work? I rarely touch my Mac. It’s a server, and I use it for a few design projects that I cannot do on my iPad. So, as mentioned above, I’ve been using the original iOS “Remote” app, which opens an iTunes-like interface and works very well for choosing music on the Mac, which then plays via AirPlay to HomePod. Of course I can still use Siri on the HomePod for all of its normal features. The only thing I do in the Remote app is choose the music. Apple hasn’t done much with the interface of that app, so it looks pretty dated at this point. Actually, it looks very much like iTunes, just a slightly older version of iTunes. But even so, being able to easily browse by albums, artists, songs and playlists is very comfortable. It fits my lifelong habit of choosing music visually a little better.

Why not just access my local iTunes music via the Home Sharing tab in the Apple Music app on my iPad or iPhone and then AirPlay it to the HomePod? I guess this would be ideal, as it would allow me to stay in the Apple Music app. Just as the Remote app allows for browsing my Mac’s music library, so too does the Music app. But performance is horrendous. When I tap the Home Sharing tab and then the tab for my Mac mini, I have to wait a minimum of a minute, sometimes more, for the music to show up. Sometimes it never shows up. If I tap out of the Home Sharing library, I have to wait again the next time I try to view it. It’s terrible. By contrast, the Remote app loads music instantly, with at most a second of lag.

But what’s even worse is that the Music app does not show any of the Apple Music tracks I’ve downloaded to my local iTunes library. It really is a terrible experience, and I’m not sure why Apple has done it this way. So, the Remote app wins easily, as it actually lets me play all of my new music and does so with an interface that updates instantly, even if it is dated.

I suspect that my new routine will be to use Apple Music and discovery via Apple’s playlists and suggested artists when I’m out walking, which is usually a minimum of an hour a day. My favorite discoveries will get downloaded to my local library, and when at home I’ll spend more time accessing my iTunes library via the Remote app. All in all, I suspect I’ll be enjoying more of my library, old and new, with this new mix, and of course all of it on this great new speaker!

HomePod: Sometimes great, sometimes just grrrrrrrrrrr.

This is the kind of device that I want to have. I’m glad I have it. I enjoy it immensely. It is a superb experience until it isn’t which is when I want to throw it out a window. Hey Apple, thanks?

I’m so confused. 😬

Tuesday morning. I’m getting out of bed as two dogs eagerly await a trip outside, which they know will be followed by breakfast. I ask Siri to play the Postal Service. She responds “Here you go,” followed by music by the Postal Service. The music is at about 50% volume. Nice. But after three full days of use I’m feeling hesitant about HomePod and the Siri within, and the next moment illustrates why. I slip on my shoes and jacket and ask Siri to pause. The music continues. I say it louder and the iPhone across the room pipes up: “You’ll need to unlock your iPhone first.” I ignore the iPhone, look directly at HomePod (5 feet away) and, getting irritated, say louder, “Hey Siri, pause!” Nothing. She does not hear me (maybe she’s enjoying the music?). By now the magic is long gone, replaced by frustration. I raise my voice to the next level, which is basically shouting, and finally HomePod responds and pauses the music. Grrr.

I go outside with my canine friends and upon return ask Siri to turn off the porch light. The iPhone across the room responds and the light goes off. I ask her to play and the HomePod responds and the Postal Service resumes. I get my coffee and iPad and sit down to finish off this review. I lay the iPhone face down so it will no longer respond to Hey Siri. Then I say, Hey Siri, set the volume to 40%. Nothing. I say it louder and my kitchen light goes off followed by Siri happily saying “Good Night Enabled”. Grrrrrrrrrrrr. I say Hey Siri loudly and wait for the music to lower then say “set the Kitchen light to 40%” and she does. The music resumes and I say Hey Siri and again I wait then I say “Play the Owls” and she does. I’d forgotten that I also wanted to lower the volume. But see how this all starts to feel like work? There’s nothing magical or enjoyable about this experience.

Here’s what I wrote Sunday morning as I worked on this review:

“When I ordered the HomePod I had no doubt I would enjoy it. Unlike so many who have bemoaned the missing features, I was happy to accept it for what Apple said it was: a great-sounding speaker with Apple Music and Siri. Simple.”

I then proceeded to write a generally positive review, which is below and which was based on my initial impressions from 1.5 days of use. By Monday I’d edited it to add more details, specifically the few failures I’d had with Siri and the frustration of the iPhone answering when I didn’t want it to.

It really is that simple. See how I did that? Apple offered the HomePod, I looked at the features and I said yes please.

I went into the HomePod expecting a very positive experience, and it’s mostly played out that way. But it’s interesting that by Tuesday morning my expectation of failure and frustration has risen. Not because HomePod is getting worse. I’d say it’s more about the gradual accumulation of failures. They are the exception to the rule but happen often enough to create a persistent sense of doubt.

Set-up

As has been reported, it’s just like the AirPods. I was done in two minutes. I did nothing other than plug it in and put my phone next to it. I tapped three or four buttons and entered a password. Set-up could not possibly be any easier.

Siri

In a few days of use I’m happy to report that HomePod has performed very well. For almost every request I’ve made, Siri has provided exactly what I asked for. My hope and expectation was that Siri on HomePod would hear my requests at a normal room voice. While iPad and iPhone both work very well, at probably about 85% accuracy, I have to be certain to speak loudly if I’m at a distance. Not a yell¹, but at or just above normal conversational levels. With HomePod on a shelf in my tiny house, Siri has responded quickly and with nearly 100% accuracy, and that’s with music playing at a fairly good volume. Not only do I not have to raise my voice, I’ve been careful to keep it at normal conversational tones or slightly lower. I’d say my level is probably slightly lower than what most people in the same room could easily understand with the music playing.

For the best experience with any iOS device, I’ve learned not to wait for Siri. I just say “Hey Siri” and naturally continue with the rest of my request. This took a little practice because early on Siri seemed to require a slight pause. Not any more. But there’s no doubt Siri still makes mistakes, even with music requests, which are supposedly her strongest skill.

The first was not surprising. I requested music by Don Pullen, a jazz musician a friend recommended. I’d never listened to him before, and no matter how I said his name, Siri just couldn’t get it. She couldn’t do it from iPhone or iPad either. Something about my pronunciation? I tried probably 15 times with no success. I did, however, discover several artists with names that sound similar to Don Pullen. I finally turned on Type to Siri and typed it in, and sure enough, it worked. I expect there are other names, be they musicians or things outside of music, that Siri just has a hard time understanding. I’ve encountered it before but not too often. The upside: the next morning I requested Don Pullen and Siri correctly played Don Pullen. Ah, sweet relief. A sign that she is “learning”?

Another fail that seems like a learning process for Siri: the first time I requested REM Unplugged 1991/21: The Complete Sessions, she failed because I didn’t use the full name. I just said REM Unplugged and she started playing a radio station for REM. When I said the album’s full name, it worked. I went back a few hours later and just said REM Unplugged, and it worked. Again, my hope is that she learns what I’m listening to so that in the future a long album name or a tricky artist name will not confuse her. We’ll see how it plays out (literally!).

Yet another failure, and this one really surprised me. I’ve listened to the album “Living Room Songs” by Olafur Arnalds quite a bit. I requested Living Room Songs and she began playing the album Living Room by AJR. Never heard of it, never listened to it. So, that’s a BIG fail. There’s nothing difficult about understanding “Living Room Songs” which is an album in my “Heavy Rotation” list. That’s the worst kind of fail.

One last trouble spot worth mentioning. I have Hey Siri turned on on both my iPhone and Apple Watch. Most of the time the HomePod picks up but not always. On several occasions both the phone and watch have responded. I’ve gotten in the habit of keeping the phone face down but I shouldn’t have to remember to do that. I definitely see room for improvement on this.

I’ve made the other usual requests during the day with great success: getting the latest news, playing the most recent episode of one of my regular podcasts, getting the weather forecast and current temperature, sending a few texts, controlling various HomeKit devices, checking the open hours of a local store and creating a few reminders. It all worked the first time.

There were a couple of nice little surprises. When changing the volume, it’s possible to just request that it be turned “up a little bit” or “down a little bit.” I’m guessing there is a good bit of natural language knowledge like that built in, and we only ever discover it by accident. Also, I discovered that when watching video on the Apple TV with the audio set to HomePod, Siri works for playback control, so there’s no need for the Apple remote! This works very well. Not only can Siri pause playback, it can fast forward and rewind as well.

Audio Quality

Of course, Apple has marketed HomePod first and foremost as a high-quality speaker and as a smart Siri speaker second. I agree with the general consensus that the audio quality is indeed superb. For music and as a sound system for my TV, I am very satisfied. My ears are not as well tuned as some, so I don’t hear the details of the 3D “soundstage” that some have described. I subscribe to Apple Music, so that’s all that matters to me, and it works very well. Other services, or third-party podcast apps, can be played from a Mac or iOS device via AirPlay to HomePod. I also use Apple’s Podcasts app (specifically for the Siri integration), so that’s not an issue for me.

Voice First: Tasks and Music

The idea of voice-first computing has caught on among some in the tech community who are certain that it is the future. I have my doubts. Even assuming perfect hardware that always hears perfectly and parses natural language requests perfectly (we’re not there yet), I have real problems with the cognitive load of voice computing. I’ll allow that it might just be a question of retraining our minds for a while. It’s probably also a process of figuring out which things are better suited to voice. Certain tasks are super easy and tend to work with Siri on whatever device. This is the list of usual things people do by voice because they require very little thinking: setting timers, alarms and reminders, controlling devices, getting the weather.

But let’s talk about HomePod and Siri as a “musicologist” for a moment. An interesting thing about playing music, at least for me, is that I often don’t know what I want to play. When I was a kid I had a crate of records and a box of cassette tapes. I could easily rattle off 10 to 20 of my current favorites. Over time the list changed and grew, but it was always a list I could easily remember. Enter iTunes and eventually Apple Music. My music library has grown by leaps and bounds. My old favorites are still there, but they are now surrounded by a seemingly endless stream of possibility. In a very strange way, choosing music is now kind of difficult because it’s overwhelming. On the one hand, I absolutely love discovering new music. I’m listening to music I never would have known of were it not for Apple Music. I’ve discovered I actually like certain kinds of jazz. I’m listening to an amazing variety of ambient and electronic music. Through playlists I’ve discovered all sorts of things. But if I don’t have a screen in front of me, the chances of remembering much of it are nil. If I’m lucky I might remember the name of a playlist, but even that is difficult as there are so many being offered up.

So while music on the HomePod sounds fantastic when it’s playing, I often have these moments of “what next?” In those moments my mind is often blank and I need a screen to see what’s possible. I’m really curious to know how other people who use voice-only music devices decide what to play next.

Conclusion

There isn’t one. This is the kind of device that I want to have. I’m glad I have it. I enjoy it immensely. It is a superb experience until it isn’t, which is when I want to throw it out a window. Hey Apple, thanks?

- Well, sometimes a yell is actually required.

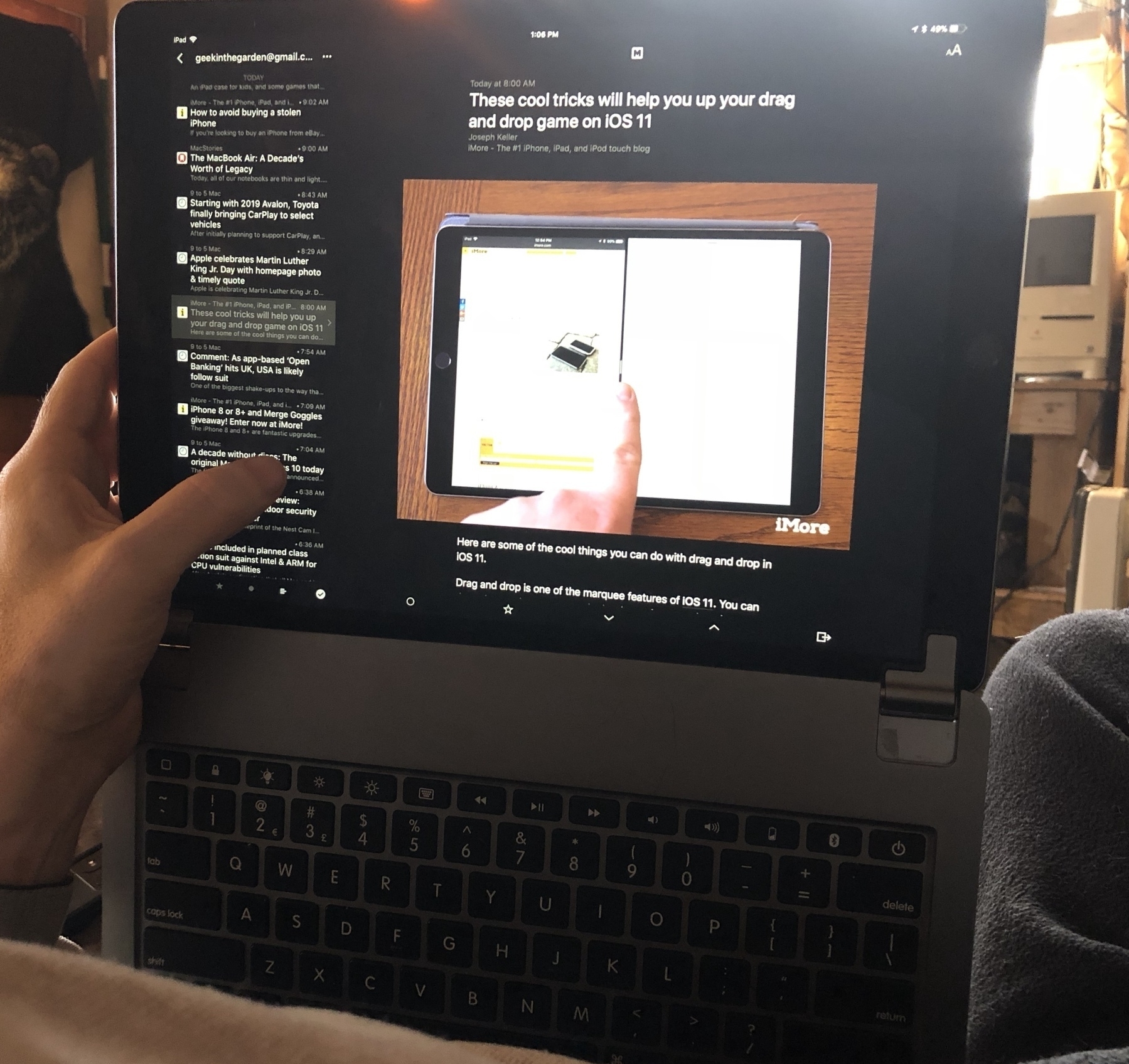

iPad Journal: Using an iPad to maintain websites – my workflow. It’s been two weeks & I’ve seen great improvement in replacing Transmit & Coda with Textastic, FileBrowser, & Files.

Using an iPad to maintain websites - my workflow

A couple of weeks ago I wrote about my website management workflow changing up a bit due to Panic’s recent announcement that they were discontinuing Transmit for iOS. To summarize: yes, Transmit will continue to work for the time being, and Panic has stated that it will continue developing Coda for iOS. But Panic has been slow to adopt new iOS features such as drag and drop, while plenty of other developers already offer that support. So, I’ve been checking out my options.

After two weeks with the new workflow on the iPad, I can say this was a great decision and I no longer consider it tentative or experimental. This is going to stick and I’m pretty excited about it. I’ve moved Coda off my dock and into a folder. In its place are Textastic and FileBrowser. Not only is this going to work, it’s going to be much better than I expected. Here’s why.

iCloud Storage, FTP, Two Pane View

Textastic allows my “local” file storage to be in iCloud, so, unlike with Coda, my files are now synced between all devices. Next, Textastic’s built-in FTP is excellent, and I get the two-pane file browser I’ve gotten used to with Transmit and Coda: local files on the left, server files on the right. The HTML editor is excellent and is, for the most part, more responsive than Coda’s. Also, and this is really nice because it saves extra tapping, I can upload right from a standard share button within the edit window. Coda requires switching out of the edit window to upload changes.

Drag and Drop

Unlike Transmit and Coda, FileBrowser has excellent drag and drop support. I’ve set up FTP servers in FileBrowser, and now it’s a simple action to select multiple files from practically anywhere and drag them right onto my server. Or, just as easily, because all of my website projects are stored in iCloud, I can drag and drop from anywhere into the appropriate project folder in the Files app, then use the FTP server in Textastic to upload. Either way works great. Coda and Transmit do not support drag and drop between apps; they are a closed silo. The new workflow is much more open, with less friction.

Image Display and Editing

One benefit of FileBrowser is the display of images. In the file view, thumbnails of images on the remote server are nicely displayed. If I need to browse through a folder of images at a much larger size, I can do that too, as it has a full-screen image display that allows for swiping through. Fantastic, and not something offered by Transmit or Coda. Also, from a list view in either Files or FileBrowser, local or remote, I can easily drag and drop an image into Affinity Photo for editing. Or, from the list view, I can select the photo and share/copy it to Affinity Photo (or any image editor).

Textastic and Files

This was another pleasant surprise. While I’ll often get into editing mode and just work from an app, in this case Textastic, every so often I come at the task from another app. Say, for example, I get new images emailed from a client, as happened today. I opened Files in Split View with Mail. In two taps I had the project folder open in Files. A simple drag and drop and my images were in the folder they needed to go to. The client also had text in the body of the email for an update to one of his pages. I copied it, then tapped the HTML file in Files, which opened the file right up in Textastic. I made the change, then uploaded the images and HTML files right from Textastic.

Problems?

Thus far I’ve encountered only one oddity with this new workflow, and it has to do with this last point of editing Textastic files by selecting them from within the Files app. As far as I can tell, this does not create a new copy or anything; it edits the file in place within Textastic. But any file I’ve accessed via Files shows up with a slight variation in the recent files list within Textastic. Same file, but the app seems to treat it as a different file, and it shows up twice in the recent files list. Weird. It is just one file though, and my changes are intact regardless of how I open it. As a user it seems like a bug, but it may just be “the way it works”.

Using HomeKit

Smart Plugs

Last spring I finally purchased my first smart plug, a HomeKit-compatible plug from KooGeek. It worked, so I bought a second. A few weeks later the local Walmart had the iSP6 HomeKit-compatible plugs from iHome on sale for only $15. I bought three. My plan was to use these with lights and to have one for my A/C in the summer, to be swapped out to the heater in my well-house in the winter. I’m pretty stingy in my use of energy, so in the winter I make it a point to keep that heater off and only turn it on when I must, which requires a good bit of effort on my part. I don’t mind walking out to the well-house, as I can always use the steps, but the mental tracking of it and the occasional forgetting are bothersome. Having a smart plug makes it convenient to power the heater on and off, but I still have to remember to keep tabs on it.

Automations

Enter automations. The Home app gets better with each new version. With automations it is now possible to trigger a scene, a device or multiple devices at specific times, at sunset/sunrise, or at a set time before or after sunset/sunrise. Very handy for a morning light but not too helpful for my well-house heater. But wait, I can also set up an automation for a plug based on a HomeKit sensor such as the iHome 5-in-1 Smart Monitor. I put the monitor in the well-house and created an automation to turn on the heater if the temperature dips to 32°F. I’ve turned my not-so-smart heater into a smarter one that will keep my water from freezing with no effort on my part. Even better, it will reduce my electricity use because of its accuracy.

I have a similar dumb heater in my tiny house, as well as a window A/C. I might use the same kind of monitor to more accurately control heating and cooling in here. Currently I do that by constantly futzing with controls and looking at a simple analog thermometer. It would be an improvement to just have a set temperature trigger the devices.

Lights

I’ve been avoiding purchasing HomeKit-compatible lights because most, such as those from Philips, also required the purchase of a hub. Also, the cost was a bit much. My reasoning was that if I just pick up smart plugs when they are on sale, I can use those for lights or anything else: cheaper and more versatile. That said, one benefit of smart bulbs is that they can be dimmed, which is appealing. So, two weeks ago I picked up one of Sylvania’s smart bulbs. It works perfectly. I’ll likely get another, but in my tiny house I don’t need many lights, so two dimmable bulbs will likely be enough. It’s very nice to be able to ask Siri to set the lights at 40% or 20% or whatever. I have an automation that turns the light on at 15% at my wake-up time. It’s very nice to wake up to a very low, soft light. With a simple request I can then ask Siri to raise the brightness when I’m actually ready to get out of bed.

Lighting Automations

An hour after sunrise, another set of LEDs kicks on for all of my houseplants, which sit on two shelves by the windows. An hour after sunset those lights go off, and at the same time the dimmable light comes on at 50%.

Apple TV as Hub

Of course, to really make this work a hub is required. A recent iPad running iOS 10 or one of the newer Apple TVs will work. I’m using the Apple TV because I’ve always got one on. Setup was easy and I’ve never had to futz with it. The nice thing about this setup is that I can access my HomeKit devices from anywhere. Whether I’m in town, visiting family or out for a walk, checking or changing devices is just a couple of taps or a request to Siri away.

HomePod

Last is the device that has not arrived yet. My HomePod is set to arrive February 9. I don’t need it for any of this to work, but I suspect it will be a nice addition. Controlling things with “Hey Siri” has always worked pretty well for me, though I suspect it will be even better with HomePod. I’ll find out soon.

Siri and voice first

In a recent episode of his Vector podcast, Rene Ritchie’s guest was “voice first” advocate Brian Roemmele. Rene is probably my current favorite Apple blogger and podcaster, and Vector is excellent.

As I listened to this episode I found myself nodding along for much of it. Roemmele is very passionate about voice first computing and certainly seems to know what he’s talking about. In regard to Siri, his primary argument seems to be that Apple made a mistake in holding Siri back after purchasing the company. At the time, Siri was an app and had many capabilities that it no longer has. Rather than take Siri further and develop it into a full-fledged platform, Apple reined it in and took a more conservative approach. In the past couple of years Apple has been adding capabilities back in via SiriKit, organized into what it calls domains.

Apps adopt SiriKit by building an extension that communicates with Siri, even when your app isn’t running. The extension registers with specific domains and intents that it can handle. For example, a messaging app would likely register to support the Messages domain, and the intent to send a message. Siri handles all of the user interaction, including the voice and natural language recognition, and works with your extension to get information and handle user requests.

So, they scaled it back and are rebuilding it. I’m not a developer, but my understanding is that they’ve done this, in part, to allow for a more varied and natural use of language. But as with all things internet and human, people often don’t want to be bothered with the details. They want what they want and they want it yesterday. In contrast to Apple’s handling of Siri, we have Amazon, which has its pedal to the floor.

Roemmele goes on to discuss the rapid emergence of Amazon’s Echo ecosystem and the growth of Alexa. Within the context of this podcast and what I’ve seen of his writing, much of his interest and background seems centered on commerce and payment as they relate to voice. Personally, I’m just not that interested in what he calls “voice commerce”. I order from Amazon maybe six times a year. Now and in the foreseeable future, I get most of what I need from local merchants. And even when I do order online, I do so visually. I would never order via voice because I have to look at the details. Perhaps I would use voice to reorder items that need replacing, such as toilet paper or toothpaste, but that’s the extent of it.

What I’m interested in is how voice can be a part of the computing experience. There are those of us who use our computers for work. For the foreseeable future I see myself interacting with my iPad visually because I can’t update a website with my voice. I can’t design a brochure with my voice. I can’t update a spreadsheet with my voice. I can’t even write with my voice, because my brain has been trained to write while reading on screen what I’m writing.

But this isn’t the computing Roemmele is discussing. His focus is “voice first devices”, those that don’t even have screens, such as the Echo and the upcoming HomePod¹. And the tasks he expects voice first computing to handle are different. This is where it gets a bit murky.

Right now I use Siri via the iPhone, iPad, Apple Watch and AirPods. In the near future I’ll have Siri in the HomePod. How do I make the most of voice first computing? What are these tasks that Siri will be able to do for me, and why is Roemmele so excited about voice first computing? The obvious ones are the sorts of things assistants such as Siri have been touted as being great for: asking for the weather, adding things to reminders, setting alarms, getting the scores for our favorite sports ball teams and so on. I and many others have written about these things that Siri has been doing for several years now. But what about the less obvious capabilities?

At one point in the podcast the two discuss using voice for such things as sending texts. When I’m walking, I often use dictation to compose a text in Messages on my phone, and I see the benefit of that. But dictation, whether to Siri or directly into Messages or any other app, at least for me, requires an almost different kind of thinking. It may be that I am alone in this. But it is easier for me to write with my fingers on the keyboard than it is to “write” with my mouth through dictation. It might also be that this is just a matter of retraining my brain. I can see myself dictating basic notes and ideas. But I don’t see myself “writing” via dictation.

At another point Roemmele suggests that apps and devices will eventually disappear as they are replaced by voice. At this point I really have to draw a line. I think this is someone passionate about voice first going off the rails. I think he’s let his excitement cloud his thinking. Holding devices, looking, touching, swiping, typing and reading: these are not going away. He seems to want it both ways, though, since at various points he acknowledges that voice first doesn’t replace apps so much as mark a shift in which voice becomes more important. That I can agree with. I think we’re already there.

Two last points. First, about the tech pundits: too often people let their own agendas and preferences color their predictions and analysis. The lines blur between their hopes and what is. No one knows the future, but too many act as if they do. It’s kinda silly.

Second, what seems to be happening with voice computing is simply that a new interface has suddenly become useful, and it absolutely seems like magic. For those of us who are science fiction fans, it’s a sweet taste of the future in the here and now. But, realistically, its usefulness is currently limited to the fairly trivial daily tasks mentioned above. Useful, convenient and delightful? Yes, absolutely. Two years ago I had to go through all the trouble of putting my finger on a switch, pushing a button or pulling a little chain; now I can simply issue a verbal command. No more trudging through the effort of tapping the weather app icon on my screen, not for me. Think of all the calories I’ll save. I kid, I kid.

But really, as nice an addition as voice is, the vast majority of my time computing will continue to be with a screen. I don’t doubt that voice interactions will become more useful as the underlying foundation improves and I look forward to the improvements. As I’ve written many times, I love Siri and use it every day. I’m just suggesting that in the real world, adoption of the voice interface will be slower and less far reaching than many would like.

- Technically, the HomePod does have a screen, but it’s not a screen in the sense that an iPhone has a screen.

Over at Beardy Guy Musings, thinking about how I create & manage websites w/ iPad as my primary device. Can I do it without Panic apps? Yup! Panic, Transmit and Keeping My Options Open

Panic, Transmit and Keeping My Options Open

I’ve been coding websites since 1999 and doing it for clients since 2002. I started using Coda for Mac when the first version came out, and when Transmit and Coda became available for iOS I purchased both. When I transitioned to the iPad as my primary computer in 2016, those two apps became the most important on my iPad. But no more.

A couple of weeks ago Panic announced that they would no longer be developing Transmit for iOS. They’d hinted in a blog post a year or two ago that iOS development was shaky for them. They say, though, that Coda for iOS will continue. Even so, I’m going to start trying alternative workflows. In fact, I’ve already put one in place and will be using it for the foreseeable future. Why do this if Coda still works and Panic has stated it will be supported in the future?

I’m not an app developer. I’m also not an insider at Panic. But as a user, I find it frustrating that it has been over three full months since the release of iOS 11, and seven months since WWDC, and Panic’s apps still do not support drag and drop in iOS 11. Plenty of other apps that I use do. I find myself a bit irritated that Panic occupies this pedestal in the Apple nerd community. It’s true that their apps are visually appealing. Great. I agree. But how’s about we add support for important functionality? I really love Coda and Transmit, but I just don’t feel the same about Panic as a company. Sometimes it seems like they’ve got plenty of time and resources for whimsy (see their blog for posts about their sign and fake photo company), and that’s great, I guess. As a user who depends on their apps, I’d rather they focus on the apps. I’m on the outside looking in, and it’s their company to run as they please. But as a user I’ll have an opinion based on the information I have. And though they’ve said Coda for iOS will continue, it’s time to test other options.

I’ve been using FileBrowser for three years just as a way to access local files on my Mac mini. I hadn’t thought much about how it might be used as my FTP client for website management in conjunction with Apple’s new Files app. Thanks to Federico’s recent article on FTP clients, I was reminded that FileBrowser is actually a very capable FTP app. So, I set up a couple of my FTP accounts. With this setup I can easily access my servers on one side of my split screen via FileBrowser and my “local” iCloud site folders in Files on the other side. I really like the feel of it. The Files app is pretty fantastic, and being able to rely on it in this setup is a big plus. It feels more open, which brings me to the next essential element in this process: editing HTML files.

One of my frustrations with Coda and Transmit was that my “local” files were stuck in a shared Coda/Transmit silo. It was nice that they were interchangeable between the two, but I could not locate them in Dropbox or iCloud. With this new setup I needed a text editor that could use iCloud as local file storage. I’m starting with two options, both of which have built-in FTP as well as iCloud as a file storage option. Textastic is my current favorite; the other is GoCoEdit. Both have a built-in preview or the option to use Safari as a live preview. So, as of now, I open my coding/preview space and use a split between Textastic and Safari. I haven’t used Textastic enough to have a real opinion about how it feels as an editor compared to Coda’s editor, but thus far it feels pretty good. My initial impression is that navigation within documents is a bit snappier, and jumping between documents using the sidebar is as fast as with Coda’s top tabs.

So, essentially, this workflow relies on four apps in split-screen mode across two spaces. One space is for file transfer, the other is for coding/previewing. Command-Tab gets me quickly back and forth between them. I often get instructions for changes via email or Messages. Same for files such as PDFs and images. In those cases it is easy enough to open Mail or Messages as a third app in Slide Over that I can refer to as I edit, or drag and drop from into Files/FileBrowser.

It’s only been a few days with this new four-app workflow, but in the time I’ve used it I like it a lot. I get drag and drop and synced iCloud files (which also means backed-up files, thanks to the Mac and Time Machine).

Hey Siri, give me the news

Ah, it’s just a little thing but it’s a little thing I’ve really wanted since learning of a similar feature on Alexa. In fact, I just mentioned it in yesterday’s post. We knew this was coming with HomePod and now it’s here for the iPad and iPhone too. Just ask Siri to give you the news and she’ll respond by playing a very brief NPR news podcast. It’s perfect, exactly what I was hoping for. I’ve already made it a habit in the morning, then around lunch and again in the evening.

Alexa Hype

A couple of years ago a good friend got one of the first Echos available. I was super excited for them, but I held off because I already had Siri. I figured Apple would eventually introduce their own stationary speaker and I’d be fine until then. But as a big fan of Star Trek and sci-fi generally, I love the idea of always-present, voice-based assistants that seem to live in the air around us.

I think he and his wife still use their Echo every day in the ways I’ve seen mentioned elsewhere: playing music, getting the news, setting timers or alarms, checking the weather, controlling lights, checking the time, and shopping from Amazon. From what I gather, that is pretty typical usage for Echo and Google Home owners. That list also fits very well with how I and many people are using Siri, with the exception of getting a news briefing, which is not yet a Siri feature. As a Siri user, I do all of those things except shop at Amazon.

The tech media has recently gone crazy over the pervasiveness of Alexa at CES 2018 and the notable absence of Siri and Apple. Ah yes, Apple missed the boat. Siri is practically dead in the water, or at least trying to catch up. It’s a theme that’s been repeated for the past couple of years. And really, it’s just silly.

Take this recent story from The Verge, reporting on research from NPR and Edison Research:

One in six US adults (or around 39 million people) now own a voice-activated smart speaker, according to research from NPR and Edison Research. The Smart Audio Report claims that uptake of these devices over the last three years is “outpacing the adoption rates of smartphones and tablets.” Users spent time using speakers to find restaurants and businesses, playing games, setting timers and alarms, controlling smart home devices, sending messages, ordering food, and listening to music and books.

Apple iOS devices with Siri are all over the planet, rather than in just the three or four countries the Echo is available in. Look, I think it’s great that the Echo exists for people who want to use it. But the tech press needs to pull its collective head out of Alexa’s ass and find the larger context and some balance in how it discusses digital assistants.

Here’s another bit from the above article and research:

The survey of just under 2,000 individuals found that the time people spend using their smart speaker replaces time spent with other devices including the radio, smart phone, TV, tablet, computer, and publications like magazines. Over half of respondents also said they use smart speakers even more after the first month of owning one. Around 66 percent of users said they use their speaker to entertain friends and family, mostly to play music but also to ask general questions and check the weather.

I can certainly see how a smart speaker is replacing radio, as 39% reported in the survey. But to put the rest in context, it seems highly doubtful that people are replacing the other listed sources with a smart speaker. Imagine a scenario where people have their Echo playing music or a news briefing. Are we to believe that they are sitting on a couch staring at a wall while doing so? Doing nothing else? No. The question in the survey was: “Is the time you spend using your Smart Speaker replacing any time you used to spend with...?”

So, realistically, the smart speaker replaces other audio devices such as radio, but that’s it. People aren’t using it to replace anything else on that list. An Echo, by its very nature, can’t replace things that are primarily visual. As fantastic as Alexa is for those who have access to it, for most users it still largely comes down to that handful of uses listed above. In fact, in another recent article on smart speakers, The New York Times throws a bit of cold water on the frenzied excitement: Alexa, We’re Still Trying to Figure Out What to Do With You

The challenge isn’t finding these digitized helpers, it is finding people who use them to do much more than they could with the old clock/radio in the bedroom.

A management consulting firm recently looked at heavy users of virtual assistants, defined as people who use one more than three times a day. The firm, called Activate, found that the majority of these users turned to virtual assistants to play music, get the weather, set a timer or ask questions.

Activate also found that the majority of Alexa users had never used more than the basic apps that come with the device, although Amazon said its data suggested that four out of five registered Alexa customers have used at least one of the more than 30,000 “skills” — third-party apps that tap into Alexa’s voice controls to accomplish tasks — it makes available.

Now, back to all the CES-related news of Alexa being embedded in, or made compatible with, new devices. I’ve not followed it too closely, but I’m curious about how this will actually play out. First, of course, there’s the question of which of these products eventually make it to market. CES announcements are notorious for being just announcements of products that never ship, or don’t ship until years later. But regardless, assuming many of them do, I’m just not sure how it all plays out.

I’m imagining a house full of devices, many of which have microphones and Alexa embedded in them. How will that actually work? Is the idea to have Alexa as an agent that listens and responds as she currently does in a speaker, but also in all of the devices, be they toilets, mirrors, refrigerators… If so, that seems like overkill and an unnecessary cost. Why not just have a smart speaker hub that intelligently connects to the devices? Why pay extra for a fridge with a microphone if I have another listening device 10 feet away? This begins to seem a bit comical.

Don’t get me wrong, I do see the value of increasing the capabilities of our devices. I live in rural Missouri and have a well house heater 150 feet away from my tiny house. I now have it attached to a smart plug and it’s a great convenience to be able to ask Siri to turn it off and on when the weather is constantly popping above freezing only to drop below freezing 8 hours later. It’s also very nice to be able to control lights and other appliances with my voice, all through a common voice interface.

But back to CES, the tech press and the popular narrative that Alexa has it all and that Siri is missing out, I just don’t see it. A smart assistant, regardless of the device it lives in, exists to allow us to issue a command or request, and have something done for us. I don’t yet have Apple’s HomePod because it’s not available. But as it is now, I have a watch, an iPhone and two iPads which can be activated via “Hey Siri”. I do this in my home many times a day. I also do it when I’m out walking my dogs. Or when I’m driving or visiting friends or family. I can do it from a store or anywhere I have internet. If we’re going to argue about who is missing out, the Echo and Alexa are stuck at home while Siri continues to work anywhere I go.

So, to summarize: yes, stationary speakers are great in that their far-field microphones work very well for a currently limited set of tasks, which are also possible with the near-field mics found in iPhones, iPads, AirPods and the Apple Watch. The benefit of the stationary devices is accurate response when spoken to from anywhere in a room. A whole family can address an Echo, whereas Siri on personal devices answers only individuals, and they have to be near their phone to use it. Or, in the case of wearables such as AirPods or the Apple Watch, they have to be wearing them. By contrast, these stationary devices are useless when we are away from home, where our mobile devices still work.

My thought is simply this: contrary to the chorus of the bandwagon, all of these devices are useful in various ways and in various contexts. We don’t have to pick a winner. We don’t have to have a loser. Use the ecosystem(s) that work best for you. If it’s Apple and Amazon, enjoy them both and use the devices in the scenarios where they work best. If it’s Amazon and Google, do the same. Maybe it’s all three. Again, these are all tools, many of which complement each other. Enough with the narrow, limiting thinking that we have to rush to pronounce a winner.

Personally, I’m already deeply invested in the Apple ecosystem, and I’m not a frequent Amazon customer, so I’ve never had a Prime membership. I’m on a limited budget, so I’ve been content to stick with Siri on my various mobile devices and wait for the HomePod. But if I were a Prime member, I would have purchased an Echo because it would have made sense for me. When the HomePod ships I’ll be first in line. I see the value of a great-sounding speaker with more accurate microphones that will give me an even better Siri experience. I won’t be able to order Amazon products with the HomePod, but I will have a speaker with fantastic audio playback and Siri, which is a trade-off I’m willing to make.

Brydge Keyboard Update

It’s been almost two months of using the Brydge keyboard, and it seems to be holding up very well in that short time. The only defect I’ve discovered is that the rightmost edge of the space bar does not work. My thumb has to be at least half an inch over to register a press. Not a deal breaker, but it is something I’ve had to adjust to.

Also, something positive that I’ve discovered: the Brydge hinges rotate all the way to a position parallel with the keyboard. In other words, the iPad rotates all the way to no angle at all; it just opens flat, level with the keyboard. I initially thought this would be useless. Why would I ever want to do this?